Events

Date: 14.08.2019

Contact Person: Marius Kloft

Date: 09.02.2023

Contact Person: Marius Kloft

Abstract: Self-supervised learning (SSL) has emerged as a powerful paradigm for machine learning, especially for drawing insights from unlabeled data. The key idea is to introduce auxiliary prediction tasks and to train a deep model to solve them. If the tasks are designed well, the trained model will be useful for a number of purposes such as anomaly detection, feature extraction, and forecasting. Unfortunately, most successful approaches to SSL rely on domain-specific inductive biases and are therefore limited to individual use cases. In this talk, I present advanced self-supervised learning losses that facilitate domain-general self-supervised learning beyond images and text. Exponential family embeddings, for example, generalize word embeddings to provide insight into a wide range of applications. They are a useful tool for studying zebrafish brains in neuroscience, shopping behavior in economics, or language evolution in computational social science. Similarly, neural transformation learning (NTL) is a new general-purpose tool for self-supervised anomaly detection. While related methods in computer vision typically require image transformations such as rotations, blurring, or flipping, NTL automatically learns the best transformations from the data and therefore generalizes self-supervised anomaly detection to almost any data type.

Date: 13.02.-17.02.2023

Contact Person: Marius Kloft

Date: 04.05.-05.05.2023

Contact Person: Marius Kloft

Date: 13.11.2023

Contact Person: Marius Kloft

Date: 22.04.-26.04.2024

Contact Person: Jakob Burger

Date: 13.05.-16.05.2024

Contact Person: Daniel Neider

Date: 21.05.2024

Contact Person: Marius Kloft

Date: 23.05.2024

Contact Person: Marius Kloft

Date: 23.05.-24.05.2024

Contact Person: Marius Kloft

Date: 07.10.-08.10.2024

Contact Person: Marius Kloft

Date: 09.10.2024

Contact Person: Marius Kloft

Date: 18.03.-26.03.2025

Contact Person: Marius Kloft

Date: 12.06.-13.06.2025

Contact Person: Marius Kloft

Date: 09.07.2025

Contact Person: Marius Kloft

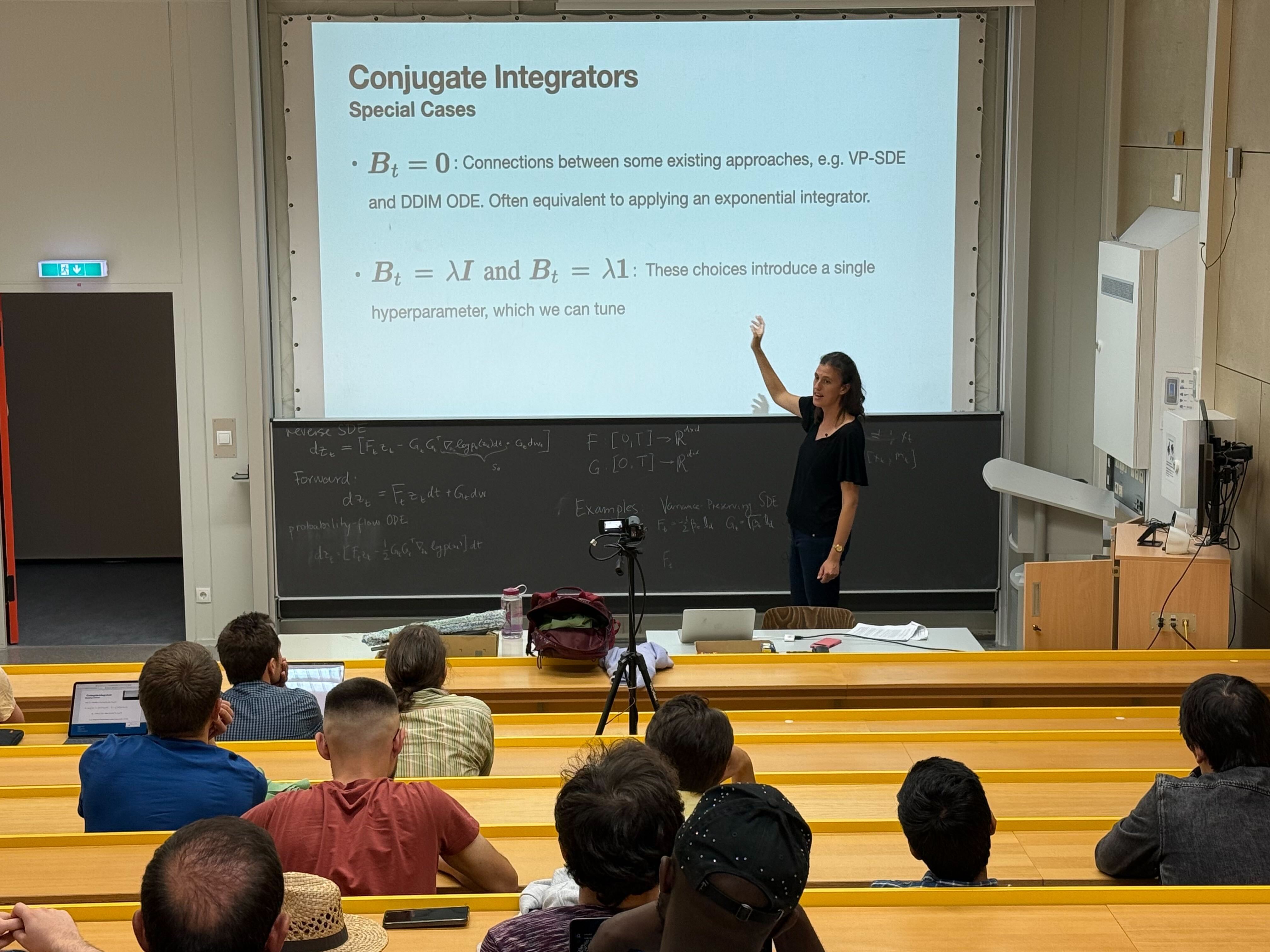

Abstract: Diffusion models have shown remarkable results in generative modeling, but they come with a major drawback: slow sampling at inference time. In this talk, I’ll present two complementary strategies to accelerate sampling in pre-trained diffusion models: conjugate integrators and splitting integrators. Conjugate integrators generalize DDIM by mapping the sampling dynamics to a space where they can be solved more efficiently. Splitting integrators, inspired by techniques from molecular dynamics, reduce numerical errors by alternating updates between data and auxiliary variables. After exploring the theoretical foundations and empirical performance of both methods, I’ll introduce a hybrid approach that combines their strengths. When applied to Phase Space Langevin Diffusion on CIFAR-10, our method outperforms baselines in both speed and sample quality, achieving high-quality samples in fewer steps.

Date: 11.08.-15.09.2025

Contact Person: Marius Kloft

Abstract: Artificial Intelligence has become ubiquitous in modern life. This “Cambrian explosion” of intelligent systems has been made possible by extraordinary advances in machine learning, especially in training deep neural networks and their ingenious architectures. However, like traditional hardware and software, neural networks often have defects, which are notoriously difficult to detect and correct. Consequently, deploying them in safety-critical settings remains a substantial challenge. Motivated by the success of formal methods in establishing the reliability of safety-critical hardware and software, numerous formal verification techniques for deep neural networks have emerged recently. These techniques aim to ensure the safety of AI systems by detecting potential bugs, including those that may arise from unseen data not present in the training or test datasets, and proving the absence of critical errors. As a guide through the vibrant and rapidly evolving field of neural network verification, this talk will give an overview of the fundamentals and core concepts of the field, discuss prototypical examples of various existing verification approaches, and showcase how generative AI can improve verification results.

Date: 17.09.2025

Contact Person: Marius Kloft

Abstract: Diffusion models have transformed generative modeling in various domains such as vision and language. But can they serve as tools for scientific inference? In this talk, Stephan Mandt presents a perspective that reframes diffusion models as Bayesian solvers for scientific inverse problems involving a noisy measurement process, with applications ranging from climate modeling to astrophysical imaging. Scientific use cases demand more than photorealism: they require calibrated uncertainty, distributional fidelity, efficient conditional sampling, and the ability to model heavy-tailed data. He’ll highlight four recent advances developed to meet these needs:

1. Variational Control, an improved framework for conditional generation in pretrained diffusion models (ICML ’25)

2. Heavy-Tailed Diffusion Models, for enabling accurate modeling of sparse and extreme-valued scientific data (ICLR ’25)

3. Conjugate Integrators, for enabling fast conditional sampling without retraining (NeurIPS ’24)

4. Generative Uncertainty for Diffusion Models, for assessing and exploiting epistemic uncertainties in data generation tasks (UAI ’25)

Date: 26.02.2026

Contact Person: Marius Kloft